For the first time in enterprise software history, 400 million Office users can manually throttle how much compute their AI assistant burns on a single task. Microsoft just turned reasoning depth into a user preference, and most IT departments aren’t ready for what that means.

The News: 27 Features, One Fundamental Shift

Microsoft released 27 new features for Microsoft 365 Copilot between January 13 and 27, 2026. The headline addition: a Model Selector that lets users toggle between three reasoning modes (Auto, the default; Quick Response; and Think Deeper) when using GPT-5.2 across Outlook, Word, Excel, PowerPoint, and Copilot Chat.

This isn’t a minor UI tweak. It’s the first time a mainstream productivity suite has exposed reasoning depth controls to end users. The GPT-5.2 integration, which began rolling out in December 2025, now offers two distinct operational modes: GPT-5.2 Thinking for complex analysis and strategic work, and GPT-5.2 Instant for everyday writing and quick translations.

The Model Selector is available on Android, Windows, iOS, Mac, and web as of January 27, 2026. Additional features span multi-agent workflows, expanded chat history, voice support in Copilot Chat, and deeper SharePoint integration. Microsoft has also wired GPT-5.2 into Work IQ, its cross-application intelligence layer that pulls context from meetings, emails, and documents.

Why This Matters: The Death of One-Size-Fits-All AI

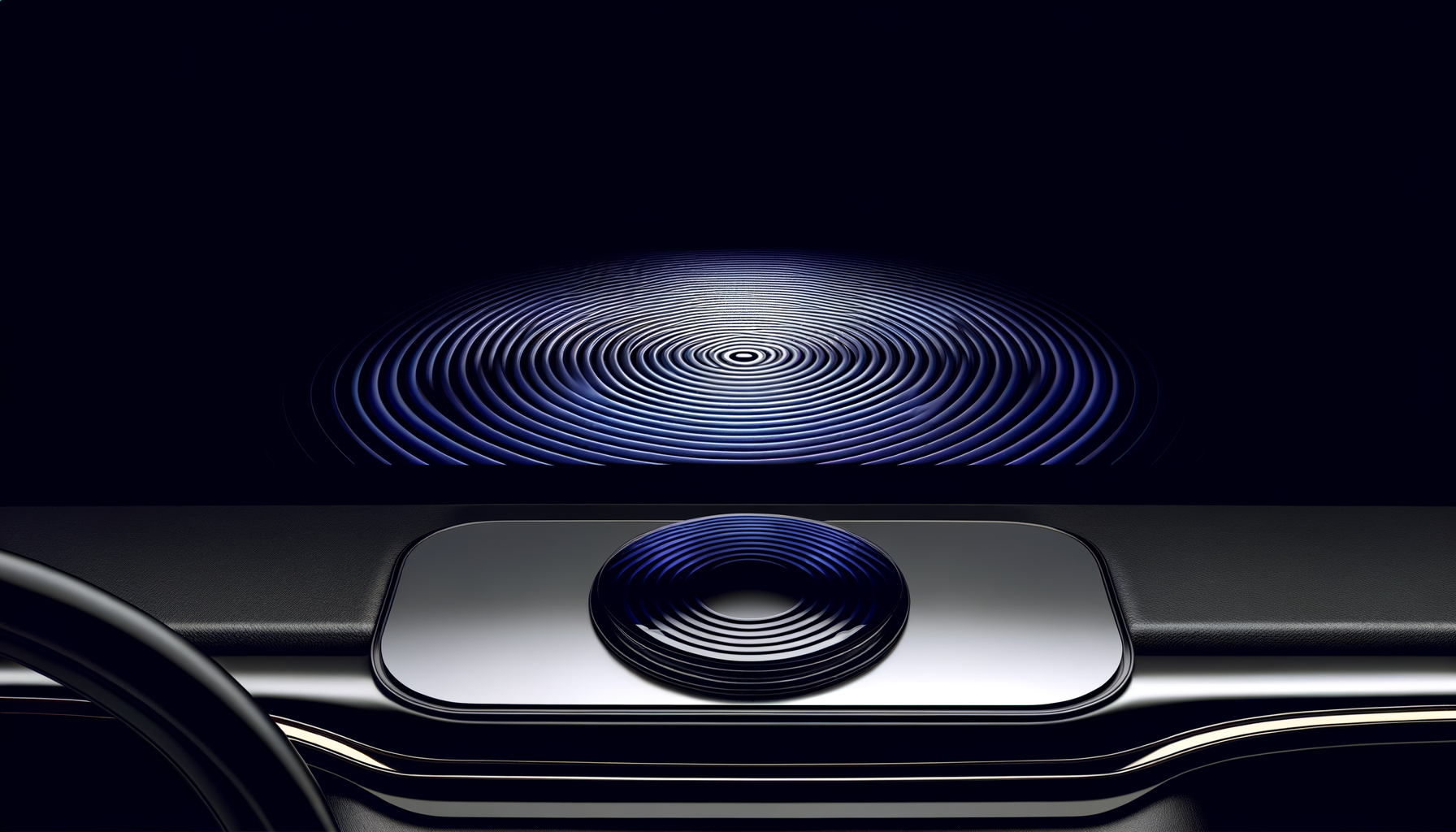

The Model Selector looks like a simple dropdown menu. Underneath, it represents a fundamental renegotiation of how humans and AI systems share cognitive load.

For power users, this is a productivity multiplier hidden in plain sight. A financial analyst building a quarterly model can now tell Copilot to “Think Deeper” on a complex forecasting problem—dedicating more inference compute and reasoning steps to the task—then flip to “Quick Response” for routine email summaries. Same tool, radically different output depth.

For IT departments, this creates a new cost variable. Think Deeper mode almost certainly consumes more tokens and compute per request. Microsoft hasn’t disclosed per-mode pricing, but the economics of inference suggest that heavy Think Deeper usage across an enterprise could meaningfully impact licensing ROI calculations.

The 5% of users who learn to context-switch between reasoning modes will outproduce their peers by margins we haven’t seen since the spreadsheet replaced the ledger.

The organizational implications run deeper. When reasoning depth becomes a user choice, you’re effectively distributing AI compute budget decisions to individual contributors. A junior analyst with good instincts about when problems require deep reasoning will extract more value from the same Copilot license than a senior VP who leaves everything on Auto.

Winners and Losers

Winners: Knowledge workers in analysis-heavy roles—strategy consultants, financial planners, legal reviewers, research scientists. Anyone whose work alternates between quick correspondence and deep analytical problems. They now have an AI that can match their cognitive gear-shifting.

Losers: IT budget planners who assumed flat per-user AI costs. Training departments that built Copilot curricula around single-mode operation. Competitors (Google Workspace, Notion AI, Slack AI) who now face pressure to expose similar controls or explain why they don’t.

The asymmetry matters: organizations that train employees to use reasoning modes strategically will compound advantages over those that don’t. This isn’t speculation—it’s the same pattern we saw with Excel power users in the 1990s and SQL-literate analysts in the 2010s.

Technical Depth: What’s Actually Happening Under the Hood

Microsoft’s implementation reveals how modern inference orchestration works at enterprise scale. Let’s break down the three modes:

Auto mode uses a classifier to route requests based on detected task complexity. Write a quick reply? Route to GPT-5.2 Instant. Analyze a multi-sheet Excel workbook with conditional logic? Escalate to GPT-5.2 Thinking. The classifier presumably examines prompt length, detected entities, application context, and historical patterns from Work IQ.

Quick Response mode pins requests to GPT-5.2 Instant regardless of complexity signals. This mode optimizes for latency over depth. Early reports suggest response times under 2 seconds for most queries. Useful when you need “good enough” fast—drafting meeting agendas, translating short passages, summarizing email threads.

Think Deeper mode engages GPT-5.2 Thinking, which appears to implement extended chain-of-thought reasoning. Based on what OpenAI has described publicly about its reasoning models, this likely means: longer context windows, more reasoning tokens allocated before output generation, and possibly iterative self-verification steps. Response latency increases (early users report 8-15 seconds for complex analytical queries), but output quality on reasoning-intensive tasks improves substantially.
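
The routing behavior described above can be sketched in a few lines. This is a toy illustration, not Microsoft's implementation: the `Request` fields, the keyword cues, the scoring weights, and the threshold are all assumptions made up for the example, and a production classifier would almost certainly be a learned model rather than a keyword check.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTO = "auto"
    QUICK = "quick_response"
    DEEP = "think_deeper"


@dataclass
class Request:
    prompt: str
    app: str                  # e.g. "outlook", "excel" (hypothetical labels)
    attached_items: int = 0   # documents, sheets, or threads in scope


# Hypothetical complexity cues, chosen only for illustration.
ANALYTIC_CUES = ("analyze", "forecast", "compare", "reconcile", "trade-off")


def complexity_score(req: Request) -> int:
    """Toy score built from the kinds of signals the article speculates about."""
    score = 0
    if len(req.prompt.split()) > 40:
        score += 1
    if any(cue in req.prompt.lower() for cue in ANALYTIC_CUES):
        score += 2
    if req.attached_items >= 3:
        score += 1
    if req.app == "excel":
        score += 1
    return score


def route(req: Request, selected: Mode = Mode.AUTO, threshold: int = 2) -> str:
    """Return the model variant a request would be sent to."""
    if selected is Mode.QUICK:   # pinned: ignore complexity signals
        return "GPT-5.2 Instant"
    if selected is Mode.DEEP:    # pinned: always use the reasoning variant
        return "GPT-5.2 Thinking"
    # Auto: escalate only when the toy score crosses the threshold.
    return "GPT-5.2 Thinking" if complexity_score(req) >= threshold else "GPT-5.2 Instant"


simple = Request("Write a quick reply saying thanks", app="outlook")
complex_ = Request("Analyze the conditional logic across these sheets",
                   app="excel", attached_items=4)
```

The point of the sketch is the shape of the decision: the two manual modes bypass classification entirely, which is exactly why a wrong manual choice cannot be rescued by the classifier.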

The Work IQ Integration

The January 2026 updates wire GPT-5.2 into Work IQ, Microsoft’s cross-application context layer. This is where things get architecturally interesting.

Work IQ maintains a unified index across your Microsoft 365 surface area: emails, calendar events, documents, Teams chats, meeting transcripts. When you invoke Think Deeper mode in Word, the model doesn’t just see your current document—it can pull relevant context from a meeting you had last Tuesday, an email thread from your legal team, and a spreadsheet your CFO shared.

The reasoning mode selector becomes more powerful with richer context. Think Deeper + Work IQ means the model can perform multi-hop reasoning across your entire work graph. “Summarize the project status” transforms from a single-document task to a cross-artifact synthesis spanning weeks of activity.

For enterprise architects, this has implications for data governance. Every document, email, and chat that feeds Work IQ becomes potential input to AI reasoning chains. If your organization hasn’t audited what’s in the Work IQ index, Think Deeper mode might surface information in unexpected ways.

Multi-Agent Workflows: The Sleeper Feature

Buried in the 27 features is expanded support for Agent Mode, which enables multi-agent workflows across Microsoft 365 applications. This deserves more attention than it’s getting.

Agent Mode allows Copilot to spawn specialized sub-agents for different tasks, coordinate their outputs, and synthesize results. Combined with the reasoning mode selector, you can now specify that certain agents operate in Think Deeper mode while others use Quick Response.

Example workflow: You ask Copilot to prepare a board presentation. It spawns an agent to extract financial data from Excel (Quick Response—structured data retrieval), another to analyze competitive positioning from your strategy documents (Think Deeper—analytical reasoning), and a third to draft slide narratives (Quick Response—templated generation). The orchestrating agent assembles outputs into a coherent deck.
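
To make the board-presentation example concrete, here is a minimal sketch of an orchestrator that assigns a reasoning mode per subtask. Everything in it is hypothetical: `SubTask`, `fake_agent`, and `run_workflow` are illustrative names, and the agent is a stub that returns a placeholder string rather than calling any Copilot API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SubTask:
    name: str
    mode: str          # "quick_response" or "think_deeper"
    instruction: str


def fake_agent(task: SubTask) -> str:
    """Stand-in for a real agent call; returns a labelled placeholder."""
    return f"[{task.mode}] {task.name}: done"


def run_workflow(tasks: List[SubTask],
                 agent: Callable[[SubTask], str] = fake_agent) -> Dict[str, str]:
    """Run each subtask with its own reasoning mode and collect results by name."""
    return {task.name: agent(task) for task in tasks}


board_deck = [
    SubTask("financials", "quick_response", "Extract quarterly figures from the workbook"),
    SubTask("competitive", "think_deeper", "Analyze positioning from strategy documents"),
    SubTask("narrative", "quick_response", "Draft slide narratives"),
]

results = run_workflow(board_deck)
# The orchestrating step here is just an ordered join of the sub-agent outputs.
deck_outline = "\n".join(results[name] for name in ("financials", "competitive", "narrative"))
```

The design decision worth noticing is that the mode lives on the subtask, not on the workflow, so only the analytical step pays the latency cost of deep reasoning.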

This is early-stage infrastructure for AI teams, not just AI tools. The organizations that learn to design effective multi-agent workflows—specifying which subtasks benefit from deep reasoning versus quick execution—will operate with fundamentally different capabilities than those using Copilot as a glorified autocomplete.

The Contrarian Take: What Most Coverage Gets Wrong

The tech press is treating the Model Selector as a power-user feature. That framing misses the point entirely.

This isn’t about giving users control. It’s about Microsoft offloading the hardest problem in AI UX to end users.

Determining optimal reasoning depth for arbitrary tasks is genuinely difficult. OpenAI, Anthropic, and Google have invested heavily in auto-classification systems precisely because users can’t reliably estimate how much compute their task requires. By exposing a manual override, Microsoft is implicitly admitting that its Auto classifier isn’t good enough, and betting that users will blame themselves for poor results when they choose wrong.

Every “Think Deeper” button is a confession that auto-classification remains unsolved. Microsoft just made it your problem.

This isn’t entirely cynical. Manual control does enable power users to extract value that auto-classification would miss. But the coverage treating this as unambiguous user empowerment ignores the failure modes: users who Think Deeper on simple tasks (wasting time and compute), users who Quick Response on complex tasks (getting shallow answers to deep questions), and organizations with no training infrastructure to help users develop mode-selection intuition.

What’s Overhyped

The “27 features” framing. Most of these are incremental improvements—expanded chat history, additional language support, minor UI refinements. The Model Selector and multi-agent workflow expansions are the only architecturally significant additions. Marketing counts features; engineers should count capabilities.

What’s Underhyped

The Work IQ + Think Deeper combination. Most coverage focuses on the selector itself, ignoring that deep reasoning over a unified work graph is qualitatively different from deep reasoning over a single document. This is where the productivity gains actually compound—and where the privacy implications get serious.

Also underhyped: the mobile parity. Having the full Model Selector on iOS and Android means knowledge workers can engage Think Deeper mode during commutes, between meetings, or while traveling. The productivity surface area for AI-augmented deep work just expanded beyond the desktop for the first time.

Practical Implications: What You Should Actually Do

If you’re a technical leader evaluating these updates, here’s what matters:

For CTOs and IT Leadership

Audit your Work IQ index before enabling Think Deeper broadly. The combination of deep reasoning and cross-application context means sensitive information can surface in unexpected outputs. Run test queries in Think Deeper mode across representative document sets and review what context gets pulled into responses.

Establish reasoning mode guidelines before users develop habits. Most users will default to Auto and never change it. The 10-15% who experiment need guidance on when Think Deeper actually helps versus when it just adds latency. Build internal documentation with task-type recommendations.

Model the compute cost implications. If Think Deeper mode consumes 3-5x the inference compute of Quick Response (an illustrative assumption based on chain-of-thought token expansion, not a figure Microsoft has published), your effective per-user cost varies dramatically based on usage patterns. Identify heavy Think Deeper users and determine whether their output justifies the compute delta.
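
A back-of-the-envelope version of that model is easy to write down. The function below is a sketch under the same assumption as the paragraph above: Quick Response is the baseline of 1.0 compute units per request, and Think Deeper costs some multiple of that. The multiplier and the usage share are inputs you would have to estimate yourself; neither is a published number.

```python
def blended_cost_multiplier(deep_share: float, deep_multiplier: float) -> float:
    """Average compute per request relative to an all-Quick-Response baseline of 1.0.

    deep_share: fraction of requests sent to Think Deeper (0.0 to 1.0).
    deep_multiplier: assumed compute cost of a Think Deeper request vs. Quick Response.
    """
    if not 0.0 <= deep_share <= 1.0:
        raise ValueError("deep_share must be between 0 and 1")
    return (1.0 - deep_share) * 1.0 + deep_share * deep_multiplier


# A user who sends 20% of requests to Think Deeper, at the low and high ends
# of the assumed 3-5x range.
low_estimate = blended_cost_multiplier(0.20, 3.0)
high_estimate = blended_cost_multiplier(0.20, 5.0)
```

Under those assumptions, a user routing one request in five to Think Deeper lands at roughly 1.4x to 1.8x the baseline compute, which is the kind of spread worth knowing before assuming flat per-user costs.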

For Engineers and Architects

Test multi-agent workflows with mixed reasoning modes. The Agent Mode expansions are the most architecturally interesting update. Build prototype workflows that combine Quick Response agents for data retrieval with Think Deeper agents for analysis. Measure where reasoning depth actually improves output quality versus where it just adds latency.

Evaluate API exposure for programmatic mode selection. Microsoft hasn’t announced public APIs for reasoning mode control, but enterprise agreements may include Graph API extensions. If you’re building internal tools that invoke Copilot, investigate whether you can specify reasoning mode programmatically based on task type.

Benchmark Think Deeper on your actual analytical workflows. The marketing examples (strategic analysis, complex forecasting) represent best cases. Test Think Deeper on your organization’s real analytical tasks and quantify the quality improvement versus Quick Response. Build data to inform mode selection guidelines.
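
One way to structure such a benchmark is a small harness that runs the same prompts in each mode and records latency alongside a quality score. The sketch below assumes you supply two callables yourself: `run(prompt, mode)`, which invokes the assistant however your environment allows, and `score(prompt, output)`, which encodes your own quality rubric. The stubs shown are placeholders so the harness can run on its own; they do not call Copilot.

```python
import statistics
import time
from typing import Callable, Dict, List, Sequence


def benchmark(run: Callable[[str, str], str],
              score: Callable[[str, str], float],
              prompts: List[str],
              modes: Sequence[str] = ("quick_response", "think_deeper"),
              ) -> Dict[str, Dict[str, float]]:
    """Run every prompt in every mode; report median latency and mean score per mode."""
    report: Dict[str, Dict[str, float]] = {}
    for mode in modes:
        latencies, scores = [], []
        for prompt in prompts:
            start = time.perf_counter()
            output = run(prompt, mode)
            latencies.append(time.perf_counter() - start)
            scores.append(score(prompt, output))
        report[mode] = {
            "median_latency_s": statistics.median(latencies),
            "mean_score": statistics.fmean(scores),
        }
    return report


# Placeholder stubs: replace with a real invocation and a real rubric.
def stub_run(prompt: str, mode: str) -> str:
    return f"{mode}:{prompt}"


def stub_score(prompt: str, output: str) -> float:
    return 1.0 if output.startswith("think_deeper") else 0.5


report = benchmark(stub_run, stub_score, ["Forecast next quarter", "Summarize this thread"])
```

With real callables plugged in, the per-mode report gives you the two numbers the guideline decision actually turns on: how much quality you gain and how much latency you pay for it.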

For Individual Practitioners

Develop mode-switching intuition. Spend two weeks explicitly choosing modes instead of accepting Auto. Note which tasks feel appropriately matched, where you over-reasoned, and where you under-reasoned. Build personal heuristics for your work patterns.

Use Think Deeper for synthesis, Quick Response for extraction. As a starting heuristic: if you’re pulling specific information from documents (names, dates, figures), Quick Response is usually sufficient. If you’re generating analysis that combines multiple sources or requires judgment, Think Deeper typically justifies its latency cost.

Combine Think Deeper with explicit context loading. Before invoking Think Deeper on a complex problem, manually reference the relevant documents, emails, and data sources in your prompt. Don’t rely solely on Work IQ’s automatic context selection. Directed context + deep reasoning outperforms automatic context + deep reasoning in most analytical scenarios.

Forward Look: Where This Leads in 6-12 Months

The Model Selector is a transitional interface. Microsoft is training 400 million users to think about reasoning depth as a variable, which sets up several likely developments:

Automatic Mode Selection Gets Smarter—Then Invisible

Within 6 months, expect Microsoft to introduce “Adaptive Auto” that uses your mode-selection history to improve per-user classification. If you consistently override Auto to Think Deeper on financial analysis tasks, the system learns. By late 2026, manual mode selection becomes a fallback rather than a regular choice for sophisticated users.
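
The mechanism is simple enough to sketch. The class below is purely speculative, like the prediction itself: `OverrideHistory`, its thresholds, and the task-type labels are invented for illustration and say nothing about how Microsoft would build such a feature. It records which mode a user picks for each task type and only starts recommending that mode once the pattern is both frequent and consistent.

```python
from collections import Counter, defaultdict


class OverrideHistory:
    """Tracks which mode a user manually chooses for each task type."""

    def __init__(self, min_samples: int = 5, min_share: float = 0.6):
        self.min_samples = min_samples   # choices needed before trusting the pattern
        self.min_share = min_share       # fraction that must agree on one mode
        self._counts = defaultdict(Counter)

    def record(self, task_type: str, chosen_mode: str) -> None:
        self._counts[task_type][chosen_mode] += 1

    def preferred_mode(self, task_type: str, default: str = "auto") -> str:
        counts = self._counts.get(task_type)
        if not counts:
            return default
        total = sum(counts.values())
        mode, hits = counts.most_common(1)[0]
        if total >= self.min_samples and hits / total >= self.min_share:
            return mode
        return default


history = OverrideHistory()
for _ in range(5):
    history.record("financial_analysis", "think_deeper")
history.record("email_reply", "quick_response")
```

After five consistent overrides, financial analysis defaults to Think Deeper, while a single email override is not enough evidence to change anything.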

Reasoning Depth Becomes a Budgetable Resource

Enterprise licensing will evolve to include “reasoning compute” allocations alongside user seat counts. Organizations will purchase pools of Think Deeper compute and allocate them across teams based on analytical intensity. Expect new admin consoles for reasoning budget management by Q3 2026.
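
If pooled reasoning compute does arrive, the allocation problem looks like ordinary proportional budgeting. The sketch below is hypothetical throughout: the notion of "pool units", the team names, and the weights are assumptions for illustration. It splits a pool in proportion to each team's weight and hands any leftover units to the teams with the largest fractional remainders, so the shares always sum to the pool.

```python
from typing import Dict


def allocate_pool(pool_units: int, weights: Dict[str, float]) -> Dict[str, int]:
    """Split a pool proportionally to weights using the largest-remainder method."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    exact = {team: pool_units * w / total for team, w in weights.items()}
    shares = {team: int(value) for team, value in exact.items()}
    leftover = pool_units - sum(shares.values())
    # Teams with the largest fractional remainder get the leftover units first.
    by_remainder = sorted(exact, key=lambda team: exact[team] - shares[team], reverse=True)
    for team in by_remainder[:leftover]:
        shares[team] += 1
    return shares


even_split = allocate_pool(1000, {"finance": 5, "legal": 3, "sales": 2})
uneven_split = allocate_pool(10, {"a": 1, "b": 1, "c": 1})
```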

Competitors Follow Within 90 Days

Google Workspace with Gemini, Notion AI, and Slack AI face immediate pressure to expose similar controls. The absence of reasoning depth controls will become a competitive liability. By April 2026, every major productivity AI will have some form of manual reasoning mode selection.

Specialized Reasoning Modes Proliferate

Three modes is just the start. Expect Microsoft to introduce task-specific reasoning profiles: “Financial Analysis” mode optimized for numerical reasoning and spreadsheet context, “Legal Review” mode with citation verification and regulatory reference, “Technical Writing” mode with terminology consistency and format awareness. The Model Selector expands from 3 options to 10+ by the end of 2026.

The Training Gap Becomes a Competitive Moat

Organizations that invest in AI reasoning fluency—teaching employees when and how to use different reasoning modes—will outperform those that don’t by measurable productivity margins. Expect consulting firms and training companies to build entire practices around “reasoning mode optimization.”

The Deeper Pattern

Microsoft’s Model Selector represents something larger than a feature update. It’s an acknowledgment that the era of AI as a black-box oracle is ending.

For the past three years, enterprise AI has operated on a simple premise: users prompt, AI responds, users accept. The intelligence of the system was entirely internal—users couldn’t see or influence how the AI processed their request. This created a learned helplessness: if the output was wrong or shallow, users had no recourse except rephrasing.

Manual reasoning mode control breaks that pattern. Users now have a lever that visibly changes system behavior. Pull the lever, get different results. This builds AI intuition in ways that black-box systems never could.

The Model Selector is crude—three modes for infinite task complexity—but it establishes the principle that users should understand and control AI processing depth. That principle, more than any specific feature, shapes what enterprise AI looks like for the next decade.

The organizations that treat AI reasoning depth as a trainable skill—not a hidden system parameter—will define the next era of knowledge work productivity.