The biggest breakthrough in robotics this year has nothing to do with robots. It’s happening in simulation—and almost nobody is paying attention.

The Real Bottleneck Nobody Talks About

Everyone’s debating AGI timelines while ignoring the actual blocker preventing physical AI from becoming ubiquitous: we can’t train robots fast enough in the real world.

Think about it. Every autonomous vehicle company, every warehouse robotics startup, every surgical robot manufacturer faces the same crushing reality. To train a robot to handle the chaos of physical environments, you need data. Mountains of it. And not just any data—you need the robot to experience edge cases, failures, unexpected scenarios, weird lighting conditions, novel objects, and a thousand other variables that only emerge when silicon meets reality.

Training physical AI traditionally requires months of real-world data collection per scenario. Months. Per scenario. Now multiply that by every scenario a delivery robot might encounter, every weather condition an autonomous truck needs to navigate, every tissue variation a surgical system must handle.

This is why robotics has remained stuck in controlled environments. Not because the algorithms aren’t good enough. Not because the hardware isn’t capable. But because the training bottleneck makes scaling economically and logistically impossible.

That constraint just disappeared.

October 2025: The Month Everything Changed

In October 2025, NVIDIA released Cosmos-Predict 2.5, and the implications are staggering. This isn’t another incremental improvement to simulation tools. It’s a unified flow-based foundation model capable of generating up to 30-second photorealistic video simulations from text, image, or video inputs—specifically designed for training physical AI systems.

Let that sink in. You can now describe an environment in text, feed in a reference image, or provide a short video clip, and the model generates photorealistic simulation data that robots can train on. The scenarios are physics-accurate. The visuals are indistinguishable from real footage. And you can generate them on demand, at scale.

World Foundation Models don’t just simulate environments—they generate infinite training grounds where a robot can experience the equivalent of 10,000 years of scenarios in a week.

Two months earlier, in August 2025, Google DeepMind announced Genie 3, their latest world model capable of generating unprecedented diversity of interactive 3D environments. While NVIDIA focused on photorealistic video generation for sim-to-real transfer, DeepMind pushed toward unlimited curriculum learning for embodied AI agents.

These aren’t competing products. They’re converging on the same paradigm shift: the realization that training physical AI doesn’t require physical environments anymore.

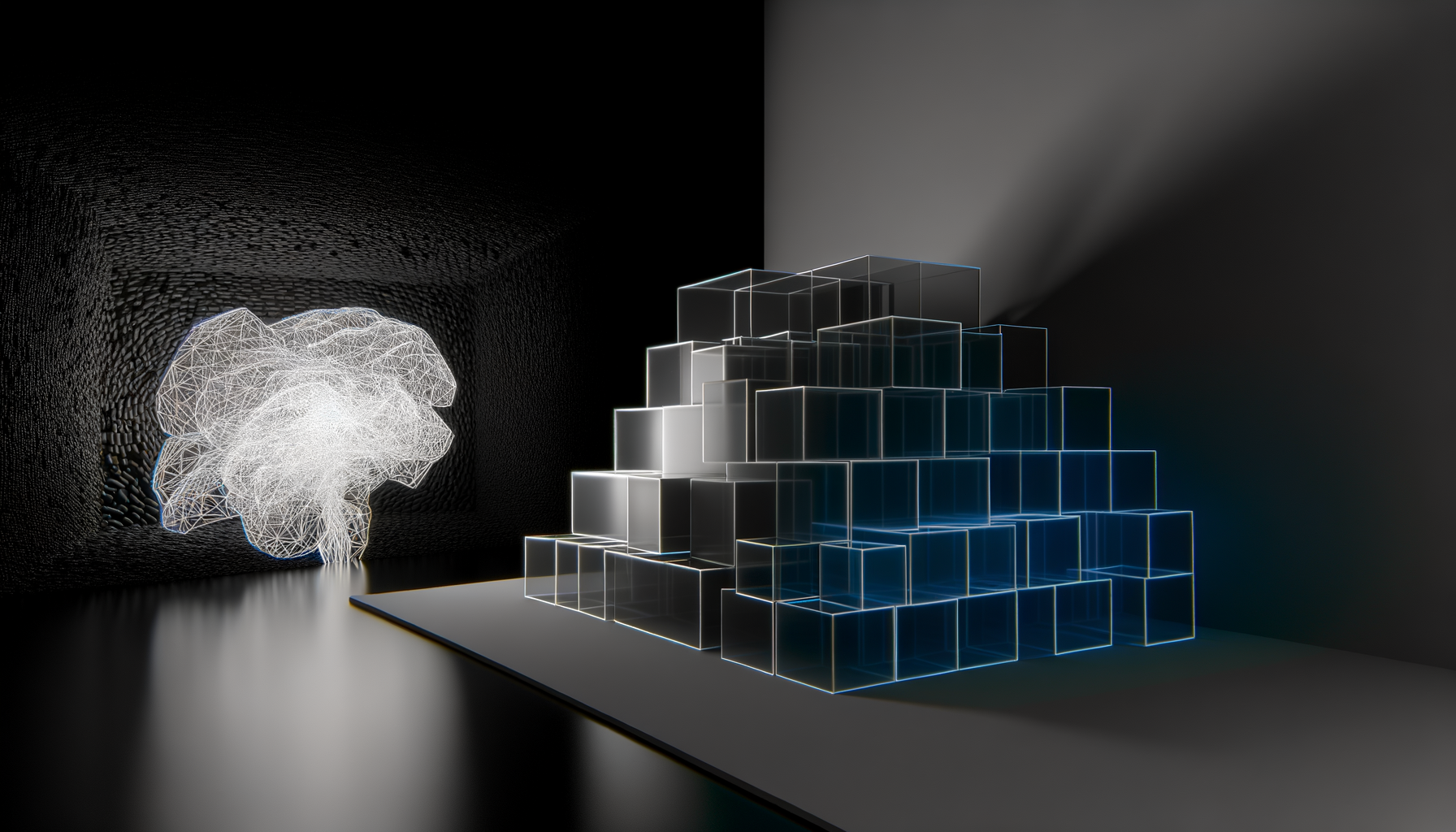

Understanding World Foundation Models

Before we dive deeper, let’s establish what World Foundation Models actually are, because the terminology matters.

World Foundation Models are large-scale generative models trained to understand and simulate how physical environments work. Unlike traditional simulators that require manual programming of physics rules and environmental parameters, WFMs learn world dynamics directly from massive datasets of real-world footage and sensor data.

The Technical Architecture

Modern WFMs like Cosmos-Predict 2.5 use diffusion and flow-based architectures that have proven superior for generating dynamic world states with accurate physics modeling. This is crucial for training robots that must interact with physical environments—the simulation needs to reflect how objects actually behave when pushed, grasped, or collided with.

The NVIDIA research paper published on arXiv in October 2025 details how these models process multimodal inputs:

- Text conditioning: Describe the scenario you want to simulate (“warehouse environment with wet floors and variable lighting, forklift approaches stacked pallets”)

- Image conditioning: Provide reference images of the environment type or specific objects the robot needs to interact with

- Video conditioning: Feed existing footage to generate variations and extensions of real scenarios

The model then generates coherent, physics-plausible video sequences that maintain spatial and temporal consistency. This isn’t just visual generation—it’s world generation.

Why Flow-Based Models Changed Everything

Previous approaches to synthetic training data suffered from a fundamental problem: the generated environments didn’t quite behave like reality. Lighting was off. Physics was approximate. Objects moved in slightly unnatural ways. These subtle discrepancies created what researchers call the “sim-to-real gap”—models trained purely in simulation failed when deployed in the real world.

Flow-based foundation models like Cosmos-Predict 2.5 address this by learning continuous transformations between states rather than discrete predictions. The result is smoother, more physically accurate simulations that dramatically reduce the sim-to-real gap.

NVIDIA’s Cosmos-Transfer 2.5 framework takes this further by achieving photorealistic Sim2Real and Real2Real transfer. The company describes it as smaller and faster than previous approaches while maintaining photorealistic output quality—meaning you can generate training data at scale without prohibitive compute costs.

The Platform Play: Infrastructure, Not Just Models

Here’s where NVIDIA’s strategy becomes particularly interesting. Cosmos isn’t just a model—it’s a platform. The full stack includes:

- World Foundation Models (the core generative engines)

- Specialized tokenizers for converting between modalities

- Guardrails for ensuring generated content is safe and appropriate

- Accelerated data pipelines for processing training data at scale

This platform approach signals that NVIDIA sees World Foundation Models as infrastructure. They’re not building a product to sell to customers. They’re building the layer that will enable an entire ecosystem of physical AI development.

Consider the implications. Today, if you’re a robotics startup, you need to:

- Build or acquire robots

- Set up physical testing environments

- Collect months of real-world data

- Train your models

- Repeat for every new scenario

With WFM infrastructure, steps 2 and 3 collapse into “generate synthetic environments.” Your bottleneck shifts from data collection to model training—a problem that scales with compute, not physical resources.

Closed-Loop Embodiment: The Training Paradigm Shift

The most significant capability WFMs unlock is what researchers call “closed-loop embodiment.” This is where autonomous agents can test policies and receive feedback in unlimited scenarios—simulated environments that react to the agent’s actions in real-time.

Closed-loop training means robots don’t just see scenarios—they interact with them, make decisions, experience consequences, and learn from failures. In simulation, you can fail a million times without breaking anything.

Consider the use cases now possible:

Warehouse Navigation

A logistics robot needs to navigate warehouses with:

- Variable lighting (fluorescent flickering, natural light through skylights, night operations)

- Dynamic obstacles (other robots, workers, dropped packages)

- Floor conditions (dust, spills, uneven surfaces)

- Shelf configurations that change daily

Previously, training for this diversity required either capturing footage in dozens of warehouses or manually programming every variable into a simulator. Now, you describe the variations you need, and the WFM generates them.

Autonomous Driving in Extreme Weather

Self-driving systems notoriously struggle with edge cases—heavy rain, snow, fog, construction zones, unusual road markings. These scenarios are dangerous and expensive to capture in reality.

WFMs can generate thousands of hours of photorealistic driving footage in conditions that would be impossible or unethical to deliberately encounter. A vehicle can experience every conceivable failure mode before touching a public road.

Surgical Robots Handling Tissue Variations

Surgical robotics requires training on tissue that varies by patient, organ, and condition. The traditional approach involves cadaver labs and limited clinical trials.

With WFMs, surgical systems can train on millions of procedural variations—different tissue densities, bleeding patterns, anatomical anomalies—all generated synthetically but grounded in real physiological data.

The Competitive Landscape: NVIDIA vs. Google DeepMind

While both NVIDIA and Google DeepMind are advancing World Foundation Models, their approaches reveal different strategic priorities.

| Aspect | NVIDIA Cosmos | Google Genie 3 |

|---|---|---|

| Primary Focus | Physical AI training infrastructure | Embodied agent curriculum learning |

| Output Format | Photorealistic video (up to 30 seconds) | Interactive 3D environments |

| Key Strength | Sim2Real transfer fidelity | Environmental diversity generation |

| Platform Strategy | Full stack (models, tokenizers, pipelines) | Research-first, integration with Google Cloud |

| Target Users | Robotics companies, AV developers, industrial automation | AI researchers, game developers, embodied AI labs |

NVIDIA’s advantage lies in their existing relationships with hardware manufacturers and robotics companies. Cosmos integrates with their GPU infrastructure, creating a seamless pipeline from simulation generation to model training to deployment on NVIDIA-powered robots.

Google’s advantage is research depth and compute scale. Genie 3’s ability to generate unprecedented diversity of interactive environments suggests they may be positioning for a future where WFMs generate entire worlds, not just training scenarios.

The Convergence Thesis

These approaches will likely converge. NVIDIA needs diversity generation to expand training coverage. Google needs photorealistic fidelity for real-world deployment. The company that cracks both first gains a significant infrastructure advantage.

2025: The Commercialization Year

The timing of these releases matters. 2025 marks the year World Foundation Models transition from academic experiments to production tools. The research papers were published. The platforms were launched. The APIs are available.

For the robotics industry, this is an inflection point comparable to the release of ImageNet for computer vision or the transformer architecture for language models. A previously limiting factor just became an abundant resource.

The question isn’t whether World Foundation Models work. It’s what happens when every robotics startup suddenly has access to unlimited, photorealistic training data.

Who Benefits First?

The immediate beneficiaries are companies with:

- Clear deployment environments: Warehouses, factories, hospitals—structured spaces where synthetic data can closely approximate reality

- Strong engineering teams: WFMs eliminate data collection but still require expertise to generate useful training curricula

- Existing robot platforms: The models are trained, but you still need hardware to deploy them

Startups that were previously blocked by data acquisition costs can now compete with larger players. The barrier shifts from resources to ingenuity—who can design the best training curricula using these new tools?

The Technical Challenges That Remain

World Foundation Models aren’t magic. Significant technical challenges remain before they can fully replace real-world training.

The Generalization Problem

WFMs are trained on existing data, which means they can only generate variations of scenarios they’ve seen. Truly novel environments—new types of objects, unprecedented situations—may still require real-world data to bootstrap.

The research community is addressing this through compositional generation, where models learn to combine elements in novel ways. But the gap between “plausible variation” and “genuine novelty” remains.

Physics Accuracy at the Margins

While WFMs achieve impressive physics simulation for common scenarios, edge cases can still produce unrealistic results. A robot trained on synthetic grasping data might fail when encountering materials with unusual friction properties or deformation characteristics.

The solution is hybrid training pipelines—using WFMs for broad scenario coverage while supplementing with targeted real-world data for physics-critical edge cases.

Compute Requirements

Generating photorealistic simulation at scale requires substantial compute. Cosmos-Predict 2.5 is optimized for efficiency, but generating thousands of hours of training footage still demands significant GPU resources.

This creates a potential centralization effect—companies with large compute budgets can generate more training data, potentially widening the gap between well-funded players and smaller competitors.

The Broader Implications: When Training Becomes Unlimited

If we take World Foundation Models to their logical conclusion, we’re looking at a fundamental restructuring of how physical AI systems are developed.

The Death of Data Moats

For years, robotics companies defended their market position through proprietary datasets. Tesla’s autonomous driving advantage came partly from billions of miles of real-world driving data. Amazon’s warehouse robots benefited from data collected across thousands of fulfillment centers.

When any company can generate equivalent training data synthetically, these moats erode. Competition shifts to other factors—hardware quality, deployment speed, regulatory relationships, brand trust.

Accelerated Safety Validation

Regulatory bodies struggle to validate autonomous systems because testing every scenario in the real world is impossible. WFMs could change this equation—generate a standardized test suite of challenging scenarios and require systems to pass before deployment.

This creates a pathway to more consistent, comprehensive safety validation than current approaches allow.

The Simulation Hypothesis Becomes Practical

Here’s where things get philosophically interesting. If we can generate photorealistic, physics-accurate worlds on demand, what does that mean for training AI systems that will eventually become more capable than humans in physical tasks?

The AI systems trained in these synthetic worlds will have experienced millions of scenarios—more than any human operator could encounter in multiple lifetimes. Their intuitions will be forged in simulation, then deployed in reality.

We’re not quite at the point of asking whether a robot might prefer its training world to the real one. But the question is less absurd than it was a year ago.

What This Means For Your Organization

If you’re working in robotics, autonomous systems, or industrial automation, the emergence of production-ready World Foundation Models requires strategic reassessment.

Immediate Actions

- Evaluate your training pipeline: Where are your current bottlenecks? If data collection is limiting iteration speed, WFMs may offer significant acceleration.

- Identify high-value synthetic scenarios: Which edge cases are currently expensive or dangerous to capture in reality? These are prime candidates for WFM generation.

- Assess compute requirements: WFM-based training requires different infrastructure than real-world data processing. Plan for GPU capacity accordingly.

Medium-Term Strategy

- Develop hybrid training curricula: The best results will likely come from combining synthetic and real data. Define what requires real-world grounding versus what can be generated.

- Build simulation-first development processes: If you can validate policies in simulation before hardware testing, development cycles compress dramatically.

- Monitor the sim-to-real gap: Track where synthetic training succeeds and fails in deployment. This data will guide future training strategy.

Long-Term Positioning

- Consider platform dependencies: Building on NVIDIA Cosmos creates efficiency but also dependency. Evaluate the strategic implications.

- Anticipate regulatory evolution: As WFMs become standard, regulators will likely develop requirements around synthetic training validation. Position for this shift.

- Watch for consolidation: If WFMs eliminate data moats, expect industry consolidation around other competitive factors. Plan accordingly.

The Road Ahead: From Training Data to Training Worlds

We’re at the beginning of a transition from generating training data to generating training worlds. The distinction matters.

Data is passive—images, videos, sensor readings that AI systems learn patterns from. Worlds are active—environments that react to agents, provide feedback, and enable closed-loop learning.

NVIDIA Cosmos and Google Genie 3 represent early steps toward a future where AI systems are born in simulation, experience millions of lifetimes of scenarios, and then enter our physical reality already expert in navigating it.

The implications extend beyond robotics. Manufacturing, logistics, healthcare, agriculture, construction—any domain where AI systems must interact with physical environments will be transformed by unlimited synthetic training.

The Timeline Question

How long until WFM-based training becomes standard? Based on current trajectories:

- 2025-2026: Early adopters integrate WFMs into existing training pipelines, demonstrating feasibility

- 2026-2027: Best practices emerge, tooling matures, smaller companies gain access

- 2027-2028: WFM-based training becomes default for new physical AI development

- 2028+: Regulatory frameworks adapt, synthetic training validation becomes standard

This timeline could compress if the sim-to-real gap closes faster than expected, or extend if fundamental limitations emerge. But the direction is clear.

The Question That Matters

Everyone in AI is asking when we’ll achieve AGI. It’s the wrong question for physical AI.

The right question is: what happens when the constraint preventing robots from scaling beyond controlled environments disappears?

We’re about to find out.

World Foundation Models from NVIDIA and Google aren’t building better simulators. They’re building the infrastructure layer that determines whether physical AI becomes ubiquitous or remains perpetually experimental.

The companies, researchers, and developers who recognize this shift—and position accordingly—will define the next decade of robotics. Those who don’t will find themselves training on real-world data while competitors iterate thousands of times faster in simulation.

The training bottleneck is gone. The race to deploy physical AI just accelerated.

World Foundation Models have transformed physical AI’s fundamental constraint from data scarcity to imagination—the only limit now is what scenarios you can conceive to train on.